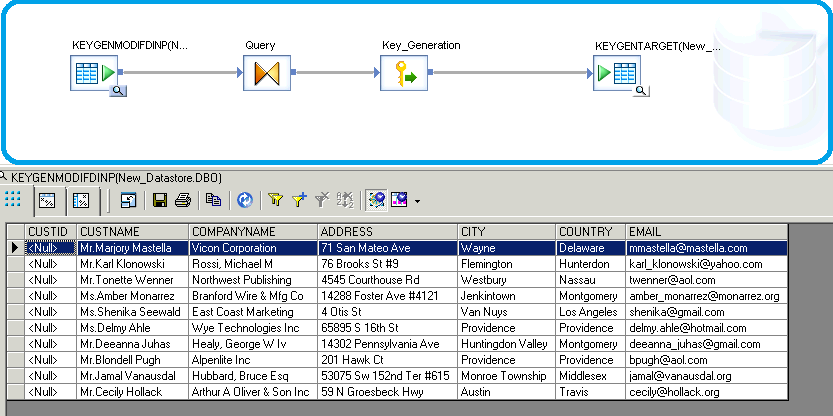

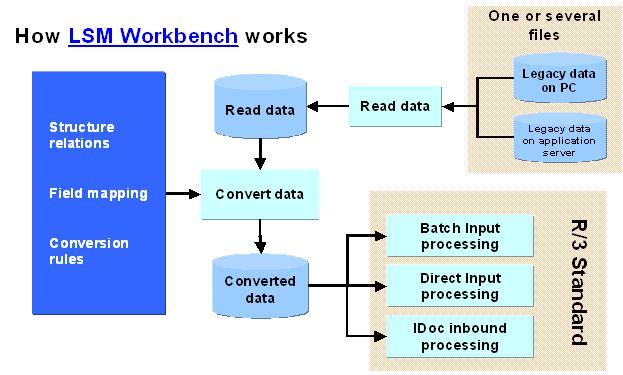

Key Generation Transformation is used to generate artificial keys for new rows in a table. The transform looks up the maximum existing key value of the surrogate key column from the table and uses it as the starting value to generate new keys for new rows in the input data set. The following table lists the names and descriptions of functions, as well as the function's category in the function wizard and smart editor. Note For information about operators, functions, and transforms that you can use as push-down functions with Data Services, see SAP Note 2212730. Aug 16, 2017 Then BODS provides a function called genrownumbygroup which allots ranking to incoming rows. But be wary of one thing when you use this function and that is, in layman language, all your incoming records should be properly arranged and in technical terms the column for which you’ll apply ranking should be ordered by first. SAP Data services is one of the finest ETL(Extract, Transform, Load) tools which delivers a single enterprise-class solution for data integration, data quality, data profiling, and text data processing that allows you to integrate, transform, improve, and deliver trusted data to critical business processes.

Description:

Date_Generation transform is used for creating time dimension tables. This transform generates a column which holds the date values based on the start & end dates provided as an input to the transform by considering the increment provided to it.

Here is the step by step guide to achieve the transformation.

Create a Project

Create a Batch Job

Take Work Flow and Data Flow

Take Date Generation Transformation

Double Click on Date_Generation Transform and Provide the details as below

Take the Query Transformation and join to the Date Generation Transformation

Go to Query Transform and Create New Output Column

Provide the Name for the Output Column to return the week in year

Go to Functions and select Date Functions then week_in_year function and click on Next

Select the Date_Generation Transform generated column then click on Finish

After mapping the week_in_year and create another New Output Column to Quarter of the Year

Give the name and data type for another output column then click on OK

Go to Functions and select Date Functions and the select quarter and click on Next

Select the Date_Generation transform generated column and click on OK

Click on Finish

Again create another output column return last date

Name the column and data type for the newly created output column

Go to Date functions and select last_date function then click on Next

Click on Finish

After mapping the all new ouput columns ,Validate the current window

Take the Template Table and join to the Query Transformation

Execute the Job

After executing the job check the data in the Target

Thanks for following.

Skip to end of metadataGo to start of metadataWhen you import a table with an Identity column it is treated like any other regular INT datatype column. And when you load it, an error is raised by the database: 'An explicit value kann not be inserted into an Identity column if IDENTITY_INSERT is set to OFF'.

But how can we load a table that has an Identity column?

First, simply do not set any column to type Identity. DI has the key generation transform to provide values for surrogate keys. It works similar to the identity column logic, take the highest key value and increment it by one. However, the Identity column checks the highest value for each and every row, Key Generation transform only once, at the beginning of the dataflow. So you cannot use the Key Generation if..

- two DI dataflows run in parallel loading the same table. Both will use the same key values.

- DI loading the table and at the same time some other application inserts data.

What is the problem with identity columns actually? DI adds them into the insert statement, even if they have a NULL value and SQL Server complaints. It wouldn't be a problem if SQL Server would look at the column value, figure there is a NULL value and hence ignore the column by itself. But no, the logic for SQL Server is: Identity column has to be omitted from the insert statement.

Actually, this can be accomplished in DI quite easily. DI does not base the insert/update/delete statement on the table schema, it does use the columns of the input schema. So all we have to do is to remove the identitiy column from the query before the table loader. And as this column is the physical primary key to the table, wher have to check the flag 'use input keys' in the table loader.

Just keep in mind, above works for insert statements only. In update statements the primary key column is not updated anyway, it is used in the where clause only. Hence, for deletes/updates not only is there no problem, but the key column is required there. That makes a dataflow with mixed inserts and updates quite ugly. We need two separate streams of data, updates go into the table unchanged, inserts are converted to normal rows so we can add a query downstream where the identity column is omitted.

A completely different approach is to do what the SQL Server error message advised us: To issue the command 'set IDENTITY_INSERT table ON'. This is a session command, so needs to be part of the session loading the data. A sql() function call cannot be used as this would be a session of its own. The only place where you can add that is the Preload Command in the table loader.

Sap Bods Jobs

Key Generation Function In Sap Bods Free

Neither of this solution is perfect. So long term a feature has to be implemented where the enduser simply can chose what method should be used, let DI generate the key or omit the identity column in inserts.